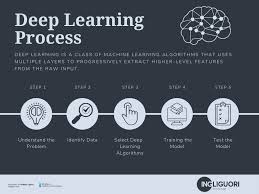

Deep learning has become the backbone of modern AI — powering applications from voice assistants to medical imaging. But when it comes to interviews, many data scientists struggle to explain the wide range of neural network architectures clearly and concisely.

This blog breaks down 20 essential deep learning models into simple, interview-friendly explanations. Think of it as your quick-fire guide to making complex architectures sound natural.

1. Perceptron – The Starting Point

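The perceptron is the simplest form of a neural network: it computes a weighted sum of its inputs, adds a bias, and passes the result through an activation function (classically a step function) to output a decision. On its own it can only separate classes with a straight line or hyperplane, but it is great for understanding the “neuron” building block of all networks.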
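To make the "weighted inputs, activation, decision" idea concrete, here is a minimal sketch in NumPy. The step activation and the hand-picked weights (chosen so the neuron behaves like a logical AND) are illustrative assumptions, not something the original post specifies.

```python
import numpy as np

def perceptron_predict(x, w, b):
    """Weighted sum plus bias, followed by a step activation (returns 0 or 1)."""
    return int(np.dot(w, x) + b > 0)

# Hypothetical, hand-picked parameters that implement logical AND
w = np.array([1.0, 1.0])
b = -1.5

inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
and_outputs = [perceptron_predict(np.array(x, dtype=float), w, b) for x in inputs]
print(and_outputs)  # [0, 0, 0, 1]
```

In an interview, a one-liner like "it draws a single straight decision boundary" pairs well with this picture.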

2. Multilayer Perceptron (MLP) – Adding Depth

By stacking layers of neurons with non-linear activations between them, MLPs can learn non-linear patterns that a single perceptron cannot (XOR is the classic example). They’re versatile, forming the backbone of many predictive models.
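A quick way to show why depth matters is XOR, which no single perceptron can compute. The sketch below is a two-layer forward pass with hand-picked (hypothetical) weights and a ReLU hidden layer; a real MLP would learn these weights with backpropagation.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def mlp_forward(x, W1, b1, W2, b2):
    """One hidden layer with ReLU, then a linear output layer."""
    hidden = relu(x @ W1 + b1)
    return hidden @ W2 + b2

# Hand-picked weights (an assumption for illustration) that solve XOR
W1 = np.array([[1.0, 1.0],
               [1.0, 1.0]])   # 2 inputs -> 2 hidden units
b1 = np.array([0.0, -1.0])
W2 = np.array([1.0, -2.0])    # 2 hidden units -> 1 output
b2 = 0.0

X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
xor_out = mlp_forward(X, W1, b1, W2, b2)
print(xor_out)  # values 0, 1, 1, 0 for the four input rows
```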

3. Convolutional Neural Networks (CNNs) – Seeing Patterns

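CNNs shine in image and video processing. Convolutions slide small learned filters across the input, scanning for edges, textures, and shapes. Because the same filter weights are reused at every position, CNNs need far fewer parameters than a fully connected network on the same image, making them ideal for vision tasks.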
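The "filter scanning for edges" idea can be sketched with a plain-NumPy sliding window. The tiny image and the two-pixel difference kernel are made up for illustration; in a trained CNN the kernel values are learned.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide the kernel over the image with no padding and stride 1.
    (As in most deep learning libraries, this is technically cross-correlation.)"""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Toy image: dark left half, bright right half
image = np.zeros((4, 6))
image[:, 3:] = 1.0

# Hypothetical filter that responds to a dark-to-bright vertical edge
kernel = np.array([[-1.0, 1.0]])

response = conv2d_valid(image, kernel)
print(response)  # non-zero only in the column where the edge sits
```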

4. Recurrent Neural Networks (RNNs) – Learning Sequences

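RNNs handle sequential data by carrying a hidden state from one time step to the next, so each output depends on the current input and a summary of everything before it. Think of them as storytellers that carry context across sentences or time steps.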
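Here is a minimal sketch of that "memory" as a loop over time steps, assuming the common vanilla RNN update `h_t = tanh(Wx x_t + Wh h_{t-1} + b)`. The sizes and random weights are arbitrary placeholders.

```python
import numpy as np

def rnn_forward(xs, Wx, Wh, b):
    """Run a vanilla RNN over a sequence and return the hidden state at every step."""
    h = np.zeros(Wh.shape[0])
    states = []
    for x in xs:
        # The new state mixes the current input with the previous state
        h = np.tanh(Wx @ x + Wh @ h + b)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
Wx = rng.normal(size=(3, 2))   # input size 2 -> hidden size 3
Wh = rng.normal(size=(3, 3))   # hidden -> hidden (the recurrence)
b = np.zeros(3)

xs = rng.normal(size=(5, 2))   # a sequence of 5 time steps
states = rnn_forward(xs, Wx, Wh, b)
print(states.shape)  # (5, 3)
```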

5. Long Short-Term Memory (LSTM) – Memory Made Smarter

LSTMs are a special type of RNN designed to mitigate the vanishing gradient problem. Learned gates “decide” what information to keep, add, or forget in a separate cell state, making them powerful for language modeling and time-series forecasting.
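The keep-or-forget behaviour can be sketched as a single LSTM step. This follows the standard gate equations; the packed weight layout (forget, input, output, candidate) and the demo values are assumptions made for this example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x, h_prev, c_prev, W, b):
    """One LSTM step. W has shape (4*H, D+H); rows are ordered as
    forget gate, input gate, output gate, candidate (an assumed layout)."""
    H = h_prev.shape[0]
    z = W @ np.concatenate([x, h_prev]) + b
    f = sigmoid(z[0:H])            # how much of the old cell state to keep
    i = sigmoid(z[H:2 * H])        # how much new information to write
    o = sigmoid(z[2 * H:3 * H])    # how much of the cell state to expose
    g = np.tanh(z[3 * H:4 * H])    # candidate values to write
    c = f * c_prev + i * g
    h = o * np.tanh(c)
    return h, c

# Demo: biases that push the forget gate open and the input gate shut,
# so the cell state is carried forward almost unchanged.
D, H = 3, 2
W = np.zeros((4 * H, D + H))
b = np.concatenate([np.full(H, 10.0), np.full(H, -10.0), np.zeros(2 * H)])
c_prev = np.array([1.0, -2.0])
h, c = lstm_step(np.ones(D), np.zeros(H), c_prev, W, b)
print(c)  # very close to c_prev
```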

6. Gated Recurrent Unit (GRU) – Efficient RNNs

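GRUs work like LSTMs but are simpler and often faster: they use two gates (update and reset) and no separate cell state, so they have fewer parameters. GRUs are popular when training time and resources are limited.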
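For comparison with the LSTM, a single GRU step can be sketched as below. Note that papers and libraries differ on which side of the blend the update gate multiplies; the convention here (a gate near 0 keeps the old state) is one common choice, and all weights are placeholder values.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_step(x, h_prev, Wz, Wr, Wh, bz, br, bh):
    """One GRU step with an update gate z and a reset gate r."""
    xh = np.concatenate([x, h_prev])
    z = sigmoid(Wz @ xh + bz)   # update gate: how much to overwrite
    r = sigmoid(Wr @ xh + br)   # reset gate: how much history feeds the candidate
    h_candidate = np.tanh(Wh @ np.concatenate([x, r * h_prev]) + bh)
    # Convention assumed here: z near 0 keeps the old state
    return (1.0 - z) * h_prev + z * h_candidate

D, H = 3, 2
zeros_w = np.zeros((H, D + H))
h_prev = np.array([0.5, -0.3])
x = np.ones(D)

# Update gate pushed shut: the old state passes through almost untouched
h_keep = gru_step(x, h_prev, zeros_w, zeros_w, zeros_w,
                  np.full(H, -10.0), np.zeros(H), np.zeros(H))
# Update gate pushed open: the state is replaced by the candidate (0 here)
h_overwrite = gru_step(x, h_prev, zeros_w, zeros_w, zeros_w,
                       np.full(H, 10.0), np.zeros(H), np.zeros(H))
print(h_keep, h_overwrite)
```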

7. Autoencoders – Data Compressors

Autoencoders learn to compress input into a smaller representation and then reconstruct it. Useful for dimensionality reduction, denoising, and anomaly detection.
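As a rough sketch of compress-then-reconstruct, the example below uses a *linear* autoencoder whose weights are taken from an SVD rather than trained by gradient descent (for a linear autoencoder, the best bottleneck spans the same subspace PCA finds). The toy data and the anomaly point are invented for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data: 200 points that all lie on one line in 3D
direction = np.array([1.0, 2.0, -1.0]) / np.sqrt(6.0)
X = rng.normal(size=(200, 1)) * direction

# Shared encoder/decoder weights from the top right-singular vector
_, _, Vt = np.linalg.svd(X, full_matrices=False)
W = Vt[:1]                      # shape (1, 3): 3 features -> 1 latent value

def encode(x):
    return x @ W.T              # compress to the bottleneck

def decode(z):
    return z @ W                # reconstruct from the bottleneck

def reconstruction_error(x):
    return np.sum((x - decode(encode(x))) ** 2, axis=-1)

normal_err = reconstruction_error(X).max()

# A hypothetical anomaly that does not lie on the line
anomaly = np.array([[2.0, -1.0, 0.0]])
anomaly_err = reconstruction_error(anomaly)[0]
print(normal_err, anomaly_err)  # tiny error for normal data, large for the anomaly
```

High reconstruction error is the signal typically used for anomaly detection.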

8. Variational Autoencoders (VAEs) – Creative Generators

VAEs extend autoencoders by encoding each input as a probability distribution over the latent space rather than a single point. Sampling from that space and decoding the sample generates new, realistic data points, from images to molecules.
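Two pieces usually come up when explaining VAEs: sampling a latent vector with the reparameterization trick, and the KL term that pulls the learned distribution toward a standard normal. The sketch below assumes a diagonal Gaussian encoder output (`mu`, `log_var`); the encoder and decoder networks themselves are omitted.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps, keeping the randomness in eps."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, sigma^2) || N(0, I) ) for a diagonal Gaussian."""
    return -0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=-1)

rng = np.random.default_rng(0)
mu = np.array([1.0, 0.0])
log_var = np.array([0.0, 0.0])

z = reparameterize(mu, log_var, rng)
kl = kl_to_standard_normal(mu, log_var)
print(z.shape, kl)  # (2,) 0.5
```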

9. Generative Adversarial Networks (GANs) – The Creative Rivalry

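GANs use two competing networks: a generator that produces fake samples and a discriminator that tries to tell them apart from real data. Training them against each other pushes the generator toward highly realistic output. They’ve transformed art, gaming, and synthetic data creation.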
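The rivalry is easiest to explain through the two losses. This sketch computes them from discriminator outputs (probabilities that a sample is real), assuming the standard binary cross-entropy discriminator loss and the commonly used non-saturating generator loss; the networks themselves and the probability values are placeholders.

```python
import numpy as np

def discriminator_loss(d_real, d_fake, eps=1e-12):
    """The discriminator wants d_real near 1 and d_fake near 0."""
    return -np.mean(np.log(d_real + eps) + np.log(1.0 - d_fake + eps))

def generator_loss(d_fake, eps=1e-12):
    """Non-saturating form: the generator wants d_fake near 1."""
    return -np.mean(np.log(d_fake + eps))

# Hypothetical discriminator outputs on a batch of two samples
d_real = np.array([0.9, 0.8])
d_fake = np.array([0.1, 0.2])

d_loss = discriminator_loss(d_real, d_fake)
g_loss = generator_loss(d_fake)
print(d_loss, g_loss)  # low discriminator loss, high generator loss
```

Here the discriminator is winning, so the generator's loss is high; training alternates updates to push the two toward balance.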

10. Residual Networks (ResNet) – Solving Deep Network Woes

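ResNets use skip connections that add a block’s input to its output, which eases gradient flow and lets very deep networks train effectively. They set records on image recognition benchmarks when introduced and remain a common backbone for vision models.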
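A skip connection is just "output = input + something learned". The sketch below uses a two-layer fully connected branch for simplicity (real ResNets use convolutions and normalization); it shows that a block with zeroed weights falls back to the identity, which is part of why stacking many blocks is easier to train.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def residual_block(x, W1, W2):
    """y = x + F(x), where F is a small two-layer branch."""
    return x + relu(x @ W1) @ W2

x = np.array([1.0, -2.0, 3.0])

# With all-zero weights the learned branch contributes nothing,
# so the block simply passes its input through.
zeros = np.zeros((3, 3))
identity_out = residual_block(x, zeros, zeros)
print(identity_out)  # same values as x
```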

11. Transformer Models – Attention is All You Need

Transformers revolutionized NLP by replacing recurrence with attention. They focus on relationships between all words in a sequence simultaneously, powering models like BERT and GPT.
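The "all words at once" idea is scaled dot-product self-attention, which can be sketched in a few lines of NumPy. This is a single attention head with random placeholder weights; multi-head attention, positional information, and the feed-forward layers of a full Transformer are left out.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)   # for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, Wq, Wk, Wv):
    """Every position attends to every position in one matrix product."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])   # (T, T) pairwise relevance
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
T, d_model, d_head = 4, 8, 8                  # 4 tokens, arbitrary sizes
X = rng.normal(size=(T, d_model))
Wq, Wk, Wv = (rng.normal(size=(d_model, d_head)) for _ in range(3))

out, weights = self_attention(X, Wq, Wk, Wv)
print(out.shape, weights.shape)  # (4, 8) (4, 4)
```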

12. Attention Mechanisms – The Spotlight Effect

Attention tells a model where to focus. Instead of treating every input equally, it highlights the most relevant parts, like humans concentrating on keywords in a sentence.

13. U-Net – Precision in Medical Imaging

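Designed for biomedical image segmentation, U-Net pairs a downsampling encoder with an upsampling decoder and links them with skip connections so fine spatial detail is preserved. It is widely used in healthcare, where accurate pixel-level predictions matter (e.g., tumor detection).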

14. Capsule Networks – Beyond CNNs

Capsule networks aim to capture spatial relationships more effectively than CNNs, recognizing objects even when rotated or skewed.

15. Graph Neural Networks (GNNs) – Learning from Connections

GNNs process graph-structured data, like social networks or drug interactions. They model relationships, not just features.
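One way to make "learning from connections" concrete is a single message-passing layer in which each node averages its own features with its neighbours' and then applies a learned transform. This mean-aggregation layer is one simple variant among many, and the three-node graph is a toy example.

```python
import numpy as np

def gnn_layer(A, H, W):
    """Average features over each node's neighbourhood (including itself),
    then apply a linear map and ReLU."""
    A_hat = A + np.eye(A.shape[0])            # add self-loops
    deg = A_hat.sum(axis=1, keepdims=True)    # neighbourhood sizes
    return np.maximum((A_hat / deg) @ H @ W, 0.0)

# Toy path graph: 0 - 1 - 2
A = np.array([[0, 1, 0],
              [1, 0, 1],
              [0, 1, 0]], dtype=float)
H = np.array([[1.0], [2.0], [3.0]])           # one feature per node
W = np.array([[1.0]])                         # identity transform for clarity

H_next = gnn_layer(A, H, W)
print(H_next.ravel())  # [1.5 2.  2.5]
```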

16. Deep Belief Networks (DBNs) – The Early Stacker

Once popular, DBNs stacked restricted Boltzmann machines to learn hierarchical representations. They’ve since been overshadowed by newer models but remain historically important.

17. Restricted Boltzmann Machines (RBMs) – Building Blocks

RBMs are energy-based models that learn patterns in data. They laid groundwork for deep architectures like DBNs.
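"Energy-based" means the model assigns a scalar energy to each configuration of visible and hidden units, with lower energy meaning more probable. A minimal sketch for a binary RBM, using the standard energy function and the conditional probability of a hidden unit switching on (the small weights below are made up):

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def energy(v, h, W, a, b):
    """E(v, h) = -a.v - b.h - v^T W h"""
    return -(a @ v) - (b @ h) - v @ W @ h

def p_hidden_given_visible(v, W, b):
    """Probability that each hidden unit is on, given the visible units."""
    return sigmoid(b + v @ W)

# Hypothetical parameters: 2 visible units, 1 hidden unit
W = np.array([[2.0], [3.0]])
a = np.array([0.5, 0.5])      # visible biases
b = np.array([1.0])           # hidden bias

v = np.array([1.0, 0.0])
h = np.array([1.0])

e = energy(v, h, W, a, b)
p_h = p_hidden_given_visible(v, W, b)
print(e, p_h)  # energy is -3.5; hidden unit is very likely on
```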

18. Deep Q-Networks (DQN) – Learning Through Rewards

In reinforcement learning, DQNs combine Q-learning with deep networks to master tasks like playing Atari games directly from pixels.
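The Q-learning part boils down to one target per transition: reward plus the discounted best next-state value. The sketch below computes those targets for a small batch; the Q-values would normally come from a target network, which is replaced here by a hard-coded array.

```python
import numpy as np

def dqn_targets(rewards, next_q_values, dones, gamma=0.99):
    """y = r + gamma * max_a' Q(s', a'), with no bootstrap on terminal steps."""
    max_next_q = next_q_values.max(axis=1)
    return rewards + gamma * (1.0 - dones) * max_next_q

# Hypothetical batch of two transitions with two possible actions each
rewards = np.array([1.0, 0.0])
next_q_values = np.array([[1.0, 2.0],
                          [3.0, 4.0]])   # stand-in for target-network output
dones = np.array([0.0, 1.0])             # the second transition ends the episode

targets = dqn_targets(rewards, next_q_values, dones, gamma=0.9)
print(targets)  # [2.8 0. ]
```

The network is then trained to move its Q-value for the action actually taken toward these targets.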

19. Siamese Networks – Finding Similarity

These networks pass two inputs through the same weight-sharing encoder and compare the resulting embeddings (e.g., for face verification). They learn to measure similarity rather than to predict a class label.
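The key detail is that both inputs go through the *same* weights. A minimal sketch with a one-layer embedding and a contrastive loss (one common training objective for Siamese networks; the margin and the random weights are assumptions):

```python
import numpy as np

def embed(x, W):
    """Shared encoder: the same W is used for both inputs."""
    return np.tanh(x @ W)

def distance(x1, x2, W):
    return np.linalg.norm(embed(x1, W) - embed(x2, W))

def contrastive_loss(d, same, margin=1.0):
    """Pull matching pairs together; push non-matching pairs beyond the margin."""
    return same * d ** 2 + (1 - same) * max(0.0, margin - d) ** 2

rng = np.random.default_rng(0)
W = rng.normal(size=(4, 2))          # 4 input features -> 2-D embedding
a = rng.normal(size=4)
b = rng.normal(size=4)

d_same = distance(a, a, W)           # identical inputs
d_diff = distance(a, b, W)
print(d_same, d_diff)                # 0.0 for identical inputs
```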

20. Neural Turing Machines – Networks with Memory

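NTMs couple a neural network controller with an external memory that it reads from and writes to through differentiable attention, mimicking the way computers store and retrieve information. They’re powerful in principle but complex and hard to train.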
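The retrieval side can be sketched as content-based addressing: compare a key against every memory row, turn the similarities into soft weights, and read a weighted mix. This covers only the read step under simplified assumptions (the controller network, write operations, and location-based addressing are omitted).

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def content_addressing(memory, key, beta):
    """Soft weights over memory rows from cosine similarity to the key.
    Larger beta makes the focus sharper."""
    norms = np.linalg.norm(memory, axis=1) * np.linalg.norm(key) + 1e-8
    similarity = memory @ key / norms
    return softmax(beta * similarity)

def read(memory, weights):
    """A differentiable read: a weighted blend of memory rows."""
    return weights @ memory

memory = np.eye(3)                    # toy memory with 3 slots
key = np.array([0.0, 1.0, 0.0])       # looks for the content of slot 1

weights = content_addressing(memory, key, beta=50.0)
read_vector = read(memory, weights)
print(weights.argmax())  # 1
```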

Source: https://medium.com/data-science-collective/deep-learning-simplified-how-to-explain-20-models-in-an-interview-bc55f99d16fe